A few days ago, OpenAI launched its Code Interpreter, capable of handling Python programming chores. The interpreter can read files uploaded by users, perform tasks described in the input prompts and, if requested, allow downloads of the outputs produced. One can also see the code the platform uses and download it for local replication and improvement. Files bigger than 100k will not make it through, but uploading a series of compressed files under that size is possible. Internet access is disabled, so do not try to use any “live” sources. Code Interpreter sessions have a time limit and expire soon after interaction ceases. While you can still see the whole session, rerunning it is not possible.

Data analysis is one of the features the interpreter offers, in addition to file conversion and solving mathematical problems. So I decided to test the former using UNDP’s Human Development Index (HDI) data. The latest 2022 data can be downloaded as an Excel file containing two sheets. Data is in the first sheet. However, its content is designed to match the table layout of the HDI report. As a result, data presentation is messy and needs proper cleaning before using the data analysis surgical knife. Since I already knew this, I decided to check how Code Interpreter would deal with messy data files. By the way, you need a ChatGPT Plus account (20 USD/month) to use Code Interpreter.

I first asked ChatGPT to read the HDI file I had just uploaded, clean it and provide descriptive stats for numerical variables. The bot quickly learned that the relevant data was in the first sheet and immediately noticed the HDI layout’s issues. It then proposed a way to move forward.

After further cleaning, it also indicated that the number of columns with names (8) did not match the total number of columns in the data. The extra columns seemed to contain footnote references for some countries. Indeed, 5 columns include such references, which are pretty useless for data analysis. ChatGPT created names for those columns and then dropped them, as they were irrelevant for statistical analysis. It ended by providing comprehensive summary statistics for the eight numeric variables in the HDI dataset.
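To give an idea of what that cleaning step involves, here is a minimal sketch in pandas. It is not the code Code Interpreter produced. It assumes the sheet has already been loaded into a DataFrame and that the footnote columns ended up with the `Unnamed: N` headers pandas typically assigns to empty header cells. The tiny table, its column names and its values are all made up for illustration.

```python
import pandas as pd

# Hypothetical miniature of the messy layout: unnamed footnote columns
# sit between the named value columns. All values are invented.
raw = pd.DataFrame(
    {
        "Country": ["Country A", "Country B", "Country C"],
        "HDI": [0.90, 0.70, 0.50],
        "Unnamed: 2": [None, None, None],
        "Life expectancy": [82.0, 74.0, 63.0],
        "Unnamed: 4": ["a", None, "b"],
    }
)


def drop_footnote_columns(df):
    """Keep only columns whose header is not an auto-generated 'Unnamed: N'."""
    keep = [c for c in df.columns if not str(c).startswith("Unnamed")]
    return df[keep]


clean = drop_footnote_columns(raw)

# describe() summarizes numeric columns only by default,
# so the text column "Country" is left out of the summary.
summary = clean.describe()
print(summary)
```

On the real file, the header rows and the report-style grouping would also need attention before this step, so treat the sketch as the last mile only.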

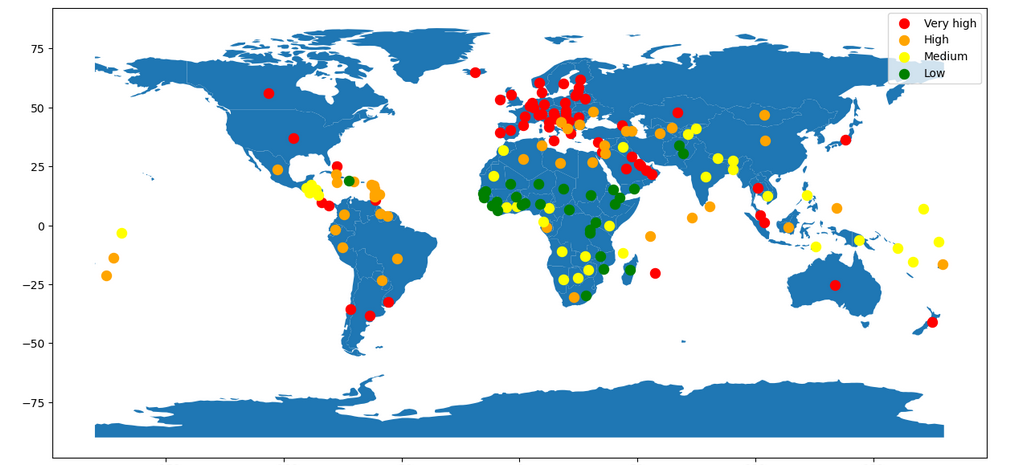

With that in hand, I uploaded a file with country geolocations, shared with ChatGPT the four categories for HDI levels UNDP uses and asked to create a map by HDI levels using dots. That went pretty smoothly and I got the following graph.
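For reference, assigning the four HDI levels is a one-liner in pandas. The sketch below assumes the cutoffs UNDP publishes (low below 0.550, medium from 0.550, high from 0.700, very high from 0.800). The column names and the sample values are hypothetical.

```python
import pandas as pd

# Bin edges assumed from UNDP's published HDI groups.
# The top edge sits just above 1.0 so the last bin covers every valid HDI value.
BINS = [0.0, 0.550, 0.700, 0.800, 1.01]
LABELS = ["Low", "Medium", "High", "Very high"]

# Invented sample values, chosen to sit on and between the cutoffs.
hdi = pd.DataFrame(
    {
        "country": ["Country A", "Country B", "Country C", "Country D"],
        "hdi": [0.45, 0.55, 0.75, 0.80],
    }
)

# right=False makes each bin include its lower edge, e.g. [0.550, 0.700).
hdi["level"] = pd.cut(hdi["hdi"], bins=BINS, labels=LABELS, right=False)
print(hdi)
```

The resulting `level` column can then drive the dot colors on the map.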

However, several countries dropped off the map as the country names used in the HDI data did not match those in the geo-file. Unfortunately, UNDP does not use ISO country codes (contained in the geo-file I uploaded) to avoid this problem. And since Code Interpreter is not connected to the Internet, it could not even try to address this gap.
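A quick way to see which countries fall off is a left join with pandas' `indicator` flag. This is only a sketch: the column names `country`, `name` and `iso3` are assumptions about how the two files might look, and the HDI values are placeholders.

```python
import pandas as pd

# Placeholder HDI table using UNDP-style names (values are invented).
hdi = pd.DataFrame(
    {
        "country": ["United States", "Norway", "Chile"],
        "hdi": [0.9, 0.9, 0.8],
    }
)

# Hypothetical geo-file with a different naming convention plus ISO codes.
geo = pd.DataFrame(
    {
        "name": ["United States of America", "Norway", "Chile"],
        "iso3": ["USA", "NOR", "CHL"],
    }
)

# indicator=True adds a "_merge" column telling where each row was found.
merged = hdi.merge(
    geo, how="left", left_on="country", right_on="name", indicator=True
)

# Rows marked "left_only" exist in the HDI data but not in the geo-file.
unmatched = merged.loc[merged["_merge"] == "left_only", "country"].tolist()
print(unmatched)
```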

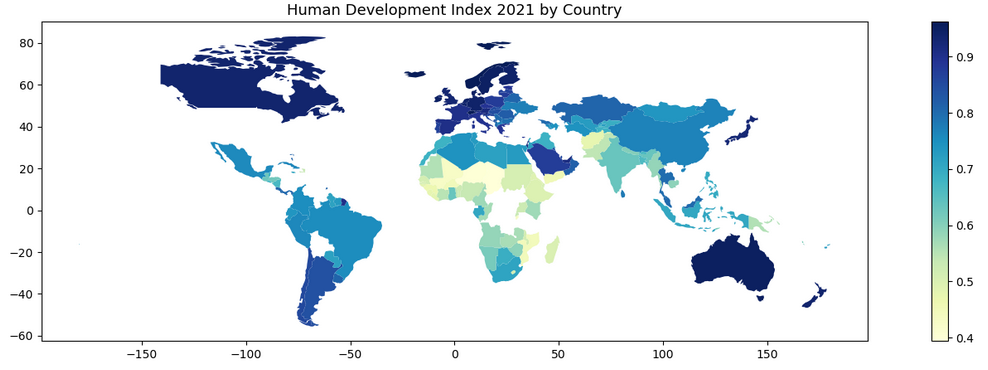

The above map is not wrong, but it can undoubtedly be improved. So I asked the bot to fill every country with its HDI value.

Looks cool, but here we lost a few additional countries, the US included. UNDP uses “United States” as its name, but most other data sets use “United States of America.” Country name mismatches can be easily solved by adding ISO codes to the HDI data. You can also ask Code Interpreter to do that, provided you upload such data to your session. Or you can download the Python code from the site, then make the corrections and fine-tune it locally.
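If you go the local route, one possible approach is a small alias table that translates UNDP names into the names the geo-file uses and then looks up the ISO code. The sketch below is an assumption about how that could be done, not what Code Interpreter generated. The function `add_iso_codes`, the `ALIASES` dictionary and the column names are hypothetical, and the HDI values are placeholders.

```python
import pandas as pd

# Hand-maintained aliases: UNDP name -> name used in the geo-file.
ALIASES = {"United States": "United States of America"}


def add_iso_codes(hdi, geo, aliases):
    """Return a copy of hdi with an 'iso3' column looked up by country name."""
    # Translate known aliases; names without an alias pass through unchanged.
    names = hdi["country"].replace(aliases)
    # Build a name -> ISO code lookup from the geo-file.
    lookup = geo.set_index("name")["iso3"]
    out = hdi.copy()
    # Names missing from the lookup end up as NaN, which makes gaps easy to spot.
    out["iso3"] = names.map(lookup)
    return out


hdi = pd.DataFrame({"country": ["United States", "Norway"], "hdi": [0.9, 0.9]})
geo = pd.DataFrame(
    {"name": ["United States of America", "Norway"], "iso3": ["USA", "NOR"]}
)

with_codes = add_iso_codes(hdi, geo, ALIASES)
without_aliases = add_iso_codes(hdi, geo, {})
print(with_codes)
```

Once both tables carry ISO codes, the map can be joined on the code instead of the name.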

In any event, doing all of the above took less than five minutes. And while I did not have to write a single line of Python code, my productivity increased substantially. But the real benefit of Code Interpreter for data buffs is not just that. They can ask the platform to do the essential legwork and concentrate on more sophisticated tasks instead. The tool might be beneficial in reducing data cleaning times and could thus eliminate many of the poorly paid jobs taking care of that business nowadays.

However, if you are not a data scientist or a programmer, Code Interpreter might not be that useful. I do not think one can learn Python this way. On the other hand, if you are a beginner in such areas, Code Interpreter will be a very powerful tool to advance your skills and compete with those with more expertise, allowing you to charge reduced rates for your work and increase your market share. I predict an overall decline in data science and programming salaries and consultancy fees, thanks to ChatGPT.

I am sure OpenAI competitors are preparing their own interpreters that might be even more powerful than this one.

Raúl