Yesterday

I accidentally learned how to master computer programming. I was finishing high school and faced the daunting decision of choosing a field of study. As my father was an engineer, I leaned toward the same area. However, my passion for music dominated my then-minimalistic environment, so I was more inclined to go for electronic engineering rather than civil engineering, my Dad’s profession. Unfortunately, the National University, which required passing a very competitive admissions exam, did not offer such an option. So, for reasons I do not recall, I ticked the mechanical engineering option on the application form.

I made the cut and secured my place. I soon discovered that all future engineers shared the same curriculum for the first two academic years, except for a few courses. Mechanical engineering had a series of very cool workshops that taught us to use and maintain tool-making machines. We proudly felt part of the working class for a few hours a week. Otherwise, calculus, physics, advanced mathematics, statistics, descriptive geometry, and technical drawing demanded more time than we could ever have found. And, of course, computer programming, starting the very first semester. That was probably the easiest, at least for me.

My first program was not sorting a few data lines, as computer textbooks usually suggest. Instead, I was asked to write a program that solves the integral of a fraction with a single variable. Not really rocket science if one is familiar with elementary integral calculus. FORTRAN was the programming language the University recommended for engineers. It ran on an IBM System/360 mainframe computer. Unfortunately, terminals were scarce at the time and, when available, only accessible to computer center staff. Consequently, we had to write the code by hand and then type it onto punched cards using keypunch machines, which were also scarce and only available at the computer center. The staff then fed the punched cards to the mainframe via a card reader. Typing errors were pervasive, and checking the cards for typos was challenging. Retyping cards with syntax errors caught by the mainframe was frequent. Student programs were run in overnight batches, once every 24 hours, so success could take a few days, while assignment deadlines were always cast in stone. The fact that my typing skills were close to zero only added insult to injury. In this light, jotting down the FORTRAN program in my paper notebook was the easy part.

A couple of years later, I finally discovered that engineering was not my thing. Machines, tools, and programming were cool, but I was now much more interested in people and the rampant social issues of a country where gigantic socioeconomic gaps were impossible to ignore. I was more than eager to understand the reasons for such a precarious situation. So I did a 180 and decided to become a sociologist instead, a decision my father was not too excited to hear, of course. He told me so but never asked me to reconsider or pushed me to change my mind. I will always be grateful for that, as I was walking a fragile tightrope, on the verge of falling at any moment.

Ten years later, I was already in New York City trying to decide if I should pursue a Ph.D. in economics, having already secured a Master’s degree. The “PC Revolution” was just starting, and IBM had recently released its PC/AT model, which seemed as powerful as the now-old and probably defunct IBM System/360. PC-based academic computer centers were popping up everywhere, and my college was not planning to be left behind. The institution did not offer engineering degrees, so students with such a background were in high demand. In addition, professors working on mathematical economics found the PC and Lotus 1-2-3 combo ideal for running much-sought simulations of non-linear differential equations relatively cheaply. Unfortunately, such simulations took a long time to complete. We usually had to leave the PC running overnight and patiently wait for the expected results. Local area networks to share printers and other devices were also new. And a few years later, the academic Internet arrived. Demand for our skills was more than sustainable in the long run.

I found myself doing plenty of computer-related work, thus securing much-needed income that helped cover some of my daily expenses, then minimal. Indeed, computer programming opened many hidden doors for me, something I never expected and that was not part of any of my multiple and unrealized career plans. I suppose I was in the right place at the right time, to use a cliché. Over the years, I taught myself new programming languages such as C, C++, and Java, and scripting languages such as Perl and PHP when the “Internet Revolution” was exploding. I also learned SAS and SPSS and mastered many econometric software packages.

I also discovered that programming was just like riding a bike. One never forgets how to ride one, no matter how long it has been since the last time. One might be a bit rusty initially, but soon one is master of the domain yet again. I had not written a line of code in over a decade, but there I was in the middle of the many tech revolutions, using skills I thought I had forgotten forever. A decade later, I had a full-time job in international development, and programming was not essential to the work. By the end of the Millennium, I was hiring programmers to write code for some of our development programs in the Global South. My comparative advantage vis-à-vis other managers was that I could discuss software specification requirements with the coders, read their code and check for quality, thoroughly test the various versions, and suggest detailed code modifications.

I would argue that programming has changed quite a bit since I started. But then again, it has not changed much at all. Indeed, a vast transformation occurred in learning computer programming thanks to the advent of graphical environments and the emergence of the Integrated Development Environment (IDE), which boosted productivity and lowered entry barriers. In fact, one could become a savvy programmer without having to embark on a computer science career. The “Mobile Revolution” and the emergence of the App reinforced this trend while broadening access to programming tools. For the most part, Apps require fewer lines of code than similar enterprise or web applications. For example, Google’s services, Search included, allegedly comprise about two billion lines of code, and Microsoft Windows over 60 million. Apps are tiny in comparison and thus relatively more straightforward to code.

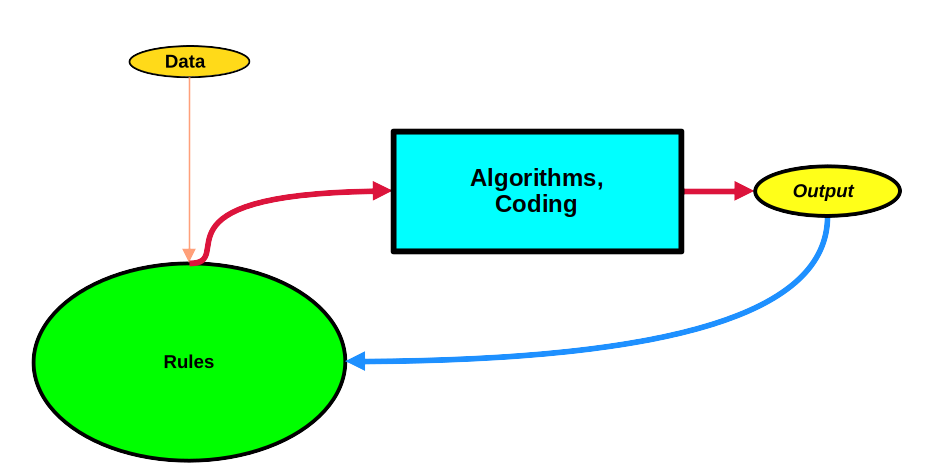

Regardless, what has not changed until recently is the overall structure of traditional computer programming, which I depict in the figure below.

The central idea is to take an input (data, preferably in small quantities) and activate the programming magic wand to generate the expected output. If the latter is not what we were looking for, the magic wand must be set in motion again until the target is hit dead center. As a programmer, I write the rules and use existing algorithms to achieve results. In the case of my first program, for example, I had to write the algorithm to perform the integral of the fraction used as input. But I also had to ensure that the input was a natural number (not a real number, a letter, a word, etc.) and that it was not very large, given the limited mainframe memory and computing architecture. I also had to write code to display the output in the best way I could. In fact, I spent more time writing rules to check the input thoroughly and display a user-friendly output than jotting down the code for the actual integral. This algorithm was probably already part of the mainframe’s computing library but was not available to me as a student. Today, one just needs to go to a website to solve any integral online. We have come a long way, indeed.
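To make the input, rules, and output structure concrete, here is a minimal sketch in Python rather than the original FORTRAN. It is not a reconstruction of my first program: the integrand (1/x, integrated from 1 to the input value), the size limit `MAX_INPUT`, and every function name are assumptions chosen purely for illustration.

```python
import math

# Hypothetical upper bound, standing in for the mainframe's memory limits.
MAX_INPUT = 10_000


def parse_natural(raw: str) -> int:
    """Input rule: accept only a natural number within the allowed range."""
    text = raw.strip()
    # Reject empty strings, signs, decimals, letters, and non-ASCII digits.
    if not (text.isascii() and text.isdigit()):
        raise ValueError(f"expected a natural number, got {raw!r}")
    value = int(text)
    if value < 1 or value > MAX_INPUT:
        raise ValueError(f"value must be between 1 and {MAX_INPUT}")
    return value


def integrate_reciprocal(upper: int) -> float:
    """Algorithm: the definite integral of 1/x from 1 to upper is ln(upper)."""
    return math.log(upper)


def format_result(upper: int, result: float) -> str:
    """Output rule: present the answer in a readable way."""
    return f"Integral of 1/x from 1 to {upper} = {result:.4f}"


def run(raw: str) -> str:
    """Input -> rules and algorithm -> output."""
    try:
        upper = parse_natural(raw)
    except ValueError as err:
        return f"Invalid input: {err}"
    return format_result(upper, integrate_reciprocal(upper))


if __name__ == "__main__":
    for sample in ["10", "3.5", "abc", "20000"]:
        print(run(sample))
```

Even in this toy version, the rules that check the input and format the output take up more lines than the one-line algorithm in the middle, which is roughly the proportion I remember from the punched-card days.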

Rules and algorithms take the shape of subroutines, functions, classes, etc. My job as a programmer is to ensure I cover all the bases and always get the desired output. But untameable bugs are always around, usually difficult to exterminate once and for all. There is always room for improvement, too. In large-scale programs such as Google Search, a team of programmers is a must for both development and maintenance. Recall that Google’s search algorithm was published in 1998. Back then, it did not comprise millions and millions of lines of code, but the codebase has grown almost exponentially over the years. While we cannot see it, as it is not Open Source, I am positive it has a vastly improved search algorithm and many rules to cover all potential gaps and pitfalls.

Raúl