Disruptive. One of the attributes most often used to describe, in minimalist terms, the potential impact of new and emerging information and communication technologies (ICTs) in society. While its actual meaning can vary from one person to another, disruption is usually linked to dramatic short-term change, in which old and obsolete technologies, processes, and institutions—not to mention people—will be either replaced or purged altogether, all for the best.

Disruption is thus implicitly connected to the concept of progress, especially to its linear version. Here, progress is seen almost like time is in physics: it always goes forward, and it is impossible to go back and edit the past. Recent research has challenged the linear conception of progress,[1] but this has yet to permeate the predominant perception.

No one will dispute the idea that ICTs have “progressed” by leaps and bounds over the last 25 to 30 years. But what about their social impact? After all, during that same period, we have seen inequality grow rapidly in most, if not all, countries. Of course, correlation does not imply causation. Nonetheless, we probably do need to revisit the idea of progress. Perhaps more relevant for us practitioners in ICTs and human development is whether we can use technology to tackle inequality effectively. Technologies such as Artificial Intelligence, Machine Learning, and Blockchains could indeed be leveraged to address the challenge. Is this happening on the ground?

The recent book by Virginia Eubanks, Automating Inequality, suggests that the answer is yes. However, the impact has so far been mostly negative. That is, new smart technologies are being used by local governments to monitor and control those eligible for state assistance (mostly the poor) and eventually disqualify them from receiving such benefits based on “objective” data and “impartial” algorithms.

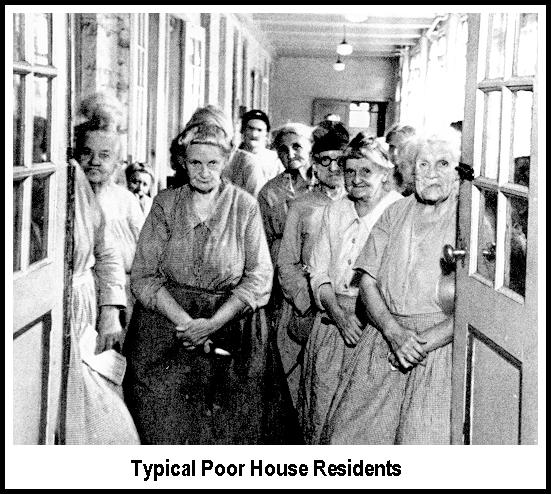

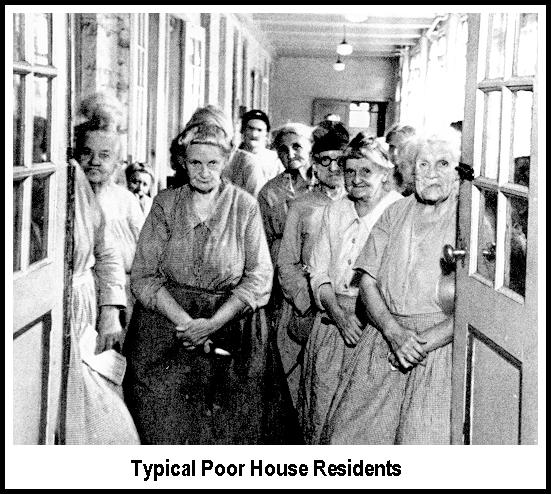

Eubanks traces the historical evolution of poverty assistance in the US to the first poorhouses in the 17th century. Poorhouses became the primary mechanism for poverty assistance in the early 19th century. However, this began to change after the 1873 economic crisis and the 1877 general strike. While Europe back then laid the foundation of the welfare state, the US opted for “scientific charity,” aiming to ensure that only those who truly deserve assistance receive it. Alleged “free riders” could be filtered out of the system and punished accordingly. This approach required both better methods and data, and ultimately gave rise to the function of casework and its human incarnation, caseworkers.

The 1929 Great Depression brought additional changes. In a nutshell, the New Deal introduced the distinction between social insurance and public assistance. Unlike the former, public aid was directly controlled by states and municipalities, free to add the controls and limitations they deemed necessary for disbursing public resources. This ended up reinforcing the “scientific charity” approach targeting, in particular, the so-called “working mothers.”

Computers were introduced in the 1970s when the demand for public assistance was at its peak in several key states. Louisiana, the first state to push the concept of the “employable mother,” which excluded, by default, most African American women, was also the first to deploy new technologies. But the rate of technology deployment to “disrupt” public assistance was very uneven. In the end, technologies ended up supporting, not disrupting, the long-term policies and ideas that triggered public assistance in its current form and the overall stigmatization of the poor.

Eubanks closely examines three cases to support this point:

1. Indiana’s successful efforts to restrict and reduce public assistance via automation and privatization.
2. The Los Angeles County management information system to record and manage the homeless.
3. Pennsylvania’s Allegheny County predictive analytics platform, which tries to guess the future behavior of the poor based on past recurrences, including actions by all those who happen to surround them.

These systems, in fact, have created the new digital poorhouses.

However, the digital poorhouses are more ominous than their analog predecessors. The former are scalable, obscure, difficult to change, and seemingly eternal, all thanks to the very nature of the technology being deployed. Moreover, these systems build on the idea of “rational discrimination,” which, in essence, assumes that bias does not exist. We could link this idea to the popular notion that technology is neutral and thus unbiased. If the algorithm gives a specific output or recommendation, it is clear that it is not coming from a disgruntled, biased bureaucrat. Instead, it is the result of a supposedly scientific procedure that we average humans cannot really argue against. The computer is never wrong.

Eubanks ends the book by proposing an “Oath of No-Harm for an Age of Big Data.” She also revisits Martin Luther King’s Poor People’s Campaign, which failed to deliver the goods after his assassination, and suggests a restart to dismantle the digital poorhouse for good. Some groups are already working along these lines, but more grassroots support will be needed to ensure success in the medium term.

When it comes to public assistance in the US, progress is indeed not its most outstanding characteristic. Eubanks does not really deal with additional technologies such as artificial intelligence and machine learning, which, by now, have become controversial in terms of scalability, obscurity, persistence, and bias.

Technological progress is undoubtedly linked to the Enlightenment and its stern endorsement of rationality as a way to free human beings from the dictatorship of nature and of other humans. This, however, does not seem to be happening here at the moment.

Cheers, Raúl

Endnotes

[1] See, for example, Amy Allen’s book, The End of Progress, for an overview of the issues surrounding progress from a critical theory perspective.
