Category: Artificial Intelligence

-

Sizing up Big Tech – III

In the early 1980s, when Apple was still an underdog facing stiff competition from larger and well-established tech companies, it masterfully used mass advertising to challenge their dominance. Television and print media were the only options available at the time. Perhaps Apple’s most famous ad was “1984,” launched in 1983, announcing the upcoming Macintosh…

-

Sizing up Big Tech – II

As the EU Digital Markets Act (DMA) acknowledges, Google operates in various markets, including operating systems, devices, email, browsers, broadcasting, cloud services, mobile apps, advertising, and search. In some cases, such as search engines and mobile operating systems that are provided “free,” the company has disproportionate world market shares (90 percent and 73 percent, respectively),…

-

Sizing up Big Tech

Have you ever bought something from Google? Many will likely answer positively—perhaps a tablet or a phone, or possibly a subscription to YouTube or Google One. Back in the early 2000s, most would have responded negatively, though. At the time, the company was synonymous with robust, mostly accurate web searches, and no one was even…

-

Sandbox Quicksand

Breathtaking digital technology innovation has been a hallmark of the past 30 years, triggering dramatic, albeit not always beneficial, social change that hardly anyone could have predicted or even imagined. Unlike previous technological or industrial revolutions, digital innovation unfolds endlessly, reaching nearly every corner of the globe at nearly the speed of light. Indeed, no…

-

Digitalizing Sovereignty – V

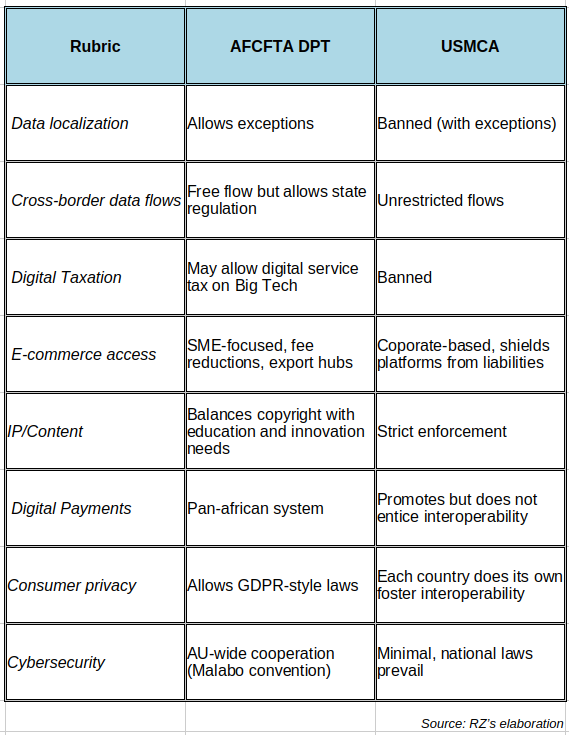

A recent blog post tackles the issues surrounding Africa’s digital sovereignty. It first defines sovereignty as a country’s capacity to independently create, develop, and govern AI. However, the adjective “supreme,” which I previously highlighted, is missing from this definition. At any rate, the authors rightly emphasize that sovereignty comprises technical and political issues. On the technical side,…

-

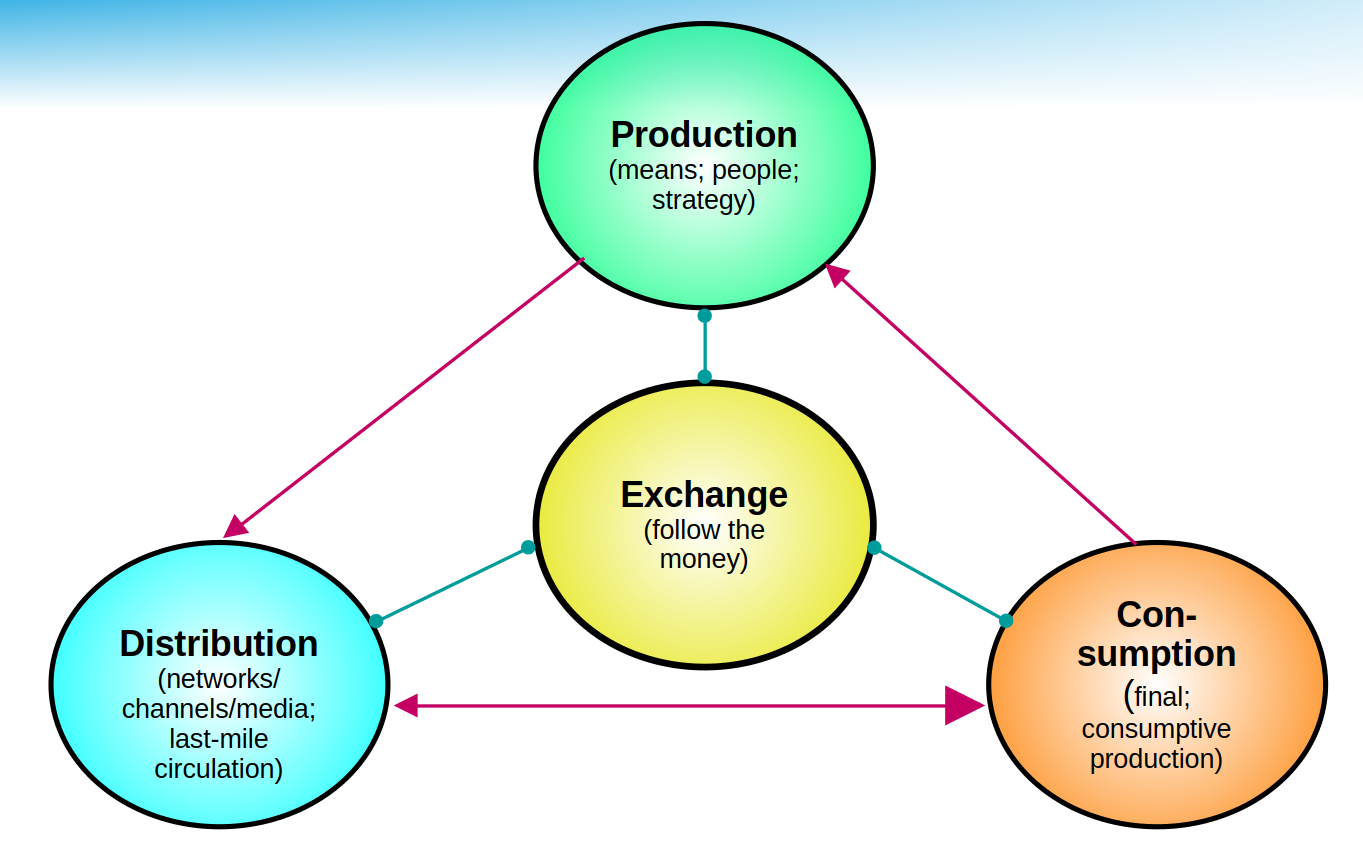

Digitalizing Sovereignty – IV

In 2006, a British mathematician running a customer-centric data science company coined the phrase “data is the new oil.” At the time, the Open Data movement was taking its first baby steps, while the expected digital data tsunami was rapidly gathering steam. The saying was meant to pinpoint that data, like oil, needs to be…

-

Digitalizing Sovereignty – III

Like governance, sovereignty has existed much longer than the modern digital universe. Thus, it has a rich history evolving over several centuries (see references below). Historical consensus holds that Jean Bodin was its conceptual father in the late 16th century, when the scourge of religious wars dominated Europe. Treaties such as the 1598 Edict of…

-

Digitalizing Sovereignty – II

Back in 1993, I was leading a global program’s developmental and technical components to connect developing countries to the Internet. Its core goal was to spread information on sustainable development following the UN 1992 Earth Summit agreements. The program started with a pilot in ten countries and eventually expanded to 50 across all regions. As…

-

Digitalizing Sovereignty – I

A few months ago, I landed at New York’s JFK airport. Fortunately, my flight went smoothly and arrived on time in the early evening. After a long walk to the immigration booths, the queues were also long, as expected. Usually, arriving passengers are split into citizens, residents, and visitors (previously categorized as “aliens”). Diplomats…

-

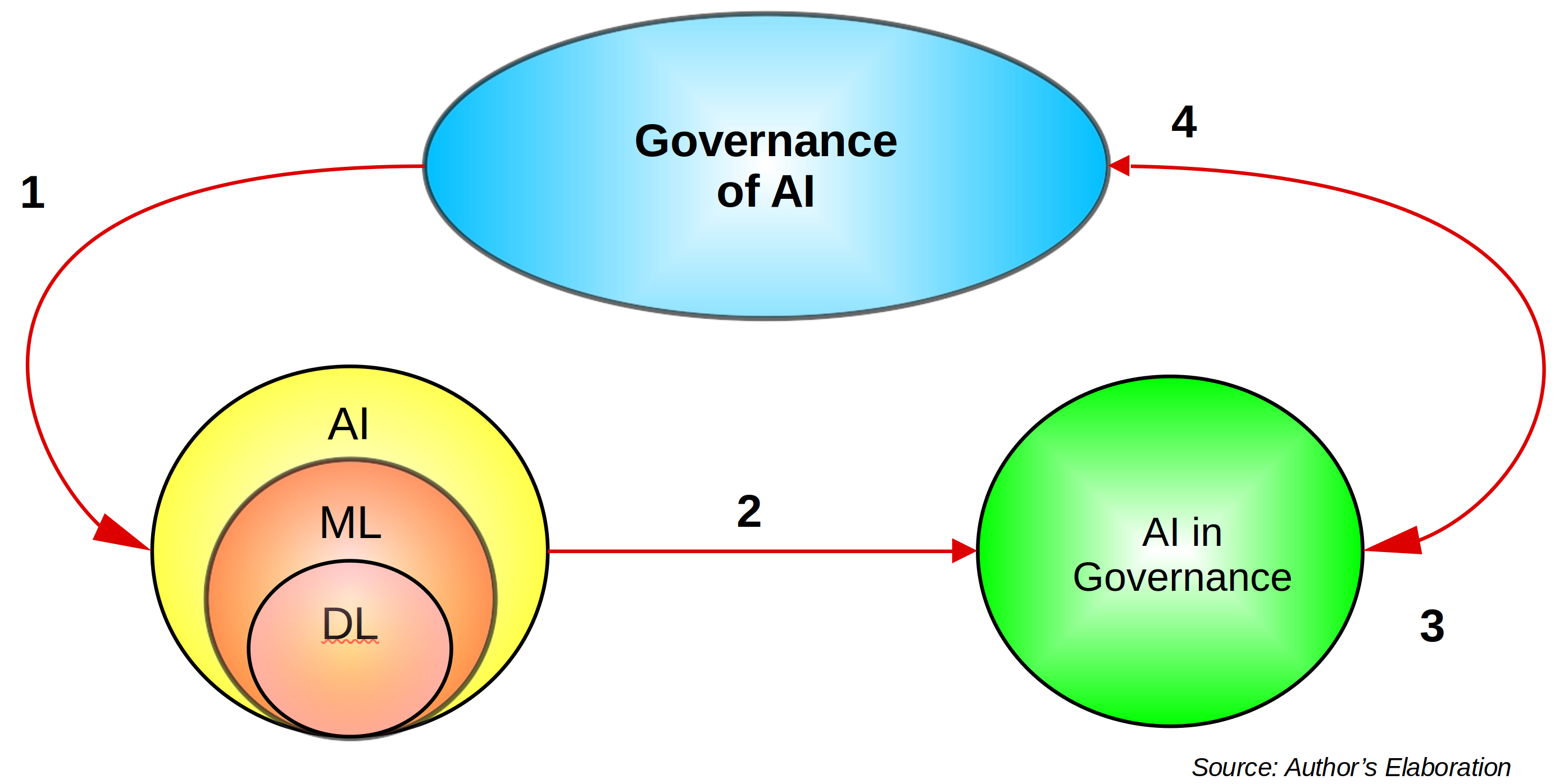

AI in the Public Sector – III

Needless to say, digital technologies are not strangers to the public sector. Indeed, Digital Government (DG) has been the subject of extensive academic research that has showcased frameworks, opportunities, and failures, including in the Global South. Initially born as e-government, the use of Information and Communication Technologies (ICTs) in and by the public sector has…

-

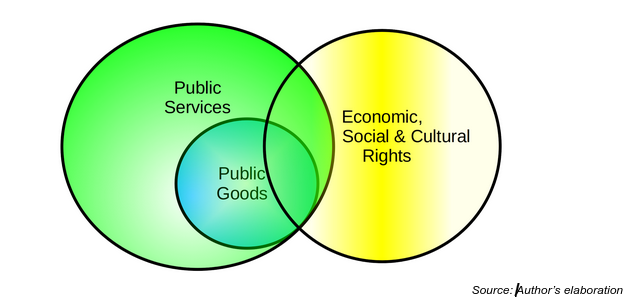

AI in the Public Sector – II

While competing theories on public services exist, two of the most relevant deserve special mention. In one corner is the French conception, which stems from the French Revolution and directly links public services to the state within a rights-based framework. It is thus very close to the Covenant on Economic, Social and Cultural Rights. On…

-

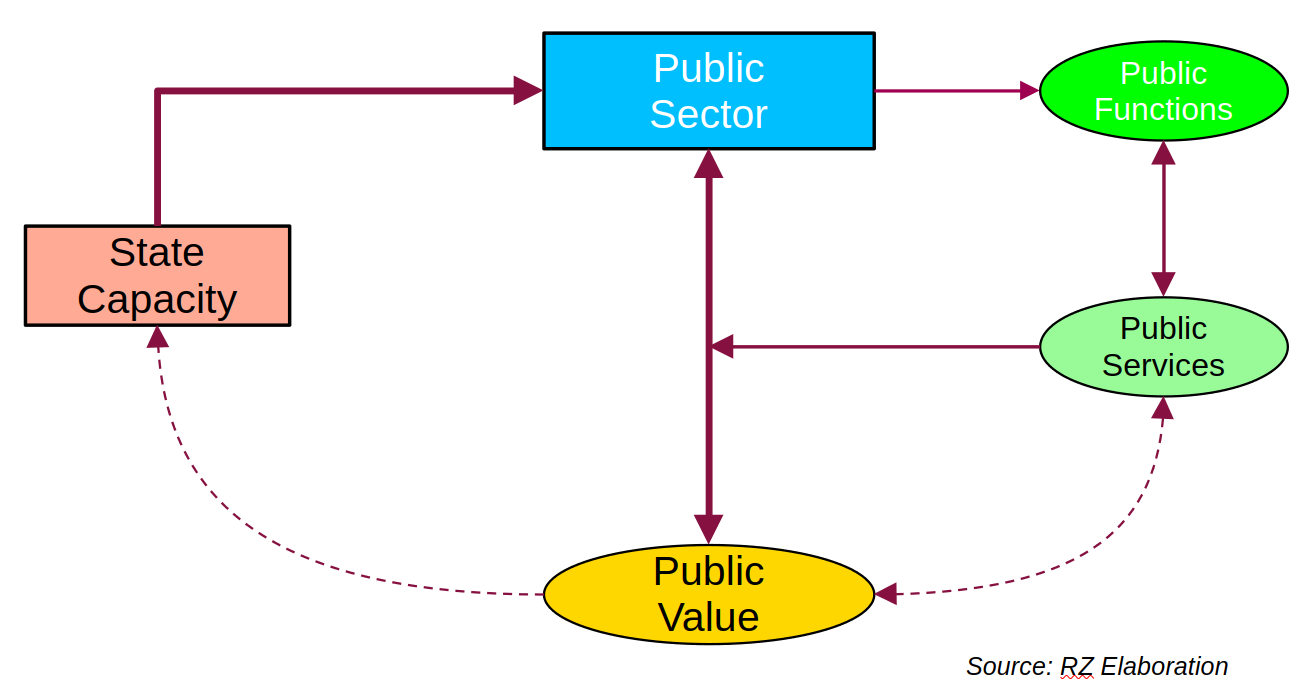

AI in the Public Sector – I

Historically, the public sector has not been a leader in deploying digital technologies. In fact, it is usually a step or two behind other sectors, including civil society organizations. Reasons for such a predicament go beyond bureaucracy and, in numerous instances, are linked to legitimacy, transparency, and accountability. After all, spending public resources responsibly demands…

-

The Information Disorder: A Critique – III

As previously suggested, the three core information categories proposed by the Information Disorder Framework (IDF)—disinformation, misinformation and malinformation—are not orthogonal. Indeed, a given message can change from one to the other as it rapidly flows through the Internet pipes, trying to feel at home in noisy, warm data centers. Clearly, what to an “evil” agent…

-

The Information Disorder: A Critique – II

While disinformation has been comfortably living in the beautiful and diverse geographies of the Global South for many decades, its grandchild, digital disinformation, is undoubtedly much younger, with not a single white hair yet visible. Indeed, digital disinformation is a 21st-century phenomenon, propelled by the emergence of the Internet and the seemingly unavoidable rise of…

-

The Information Disorder: A Critique – I

Disinformation has been an almost constant threat in the Global South, haunting middle- and lower-income countries for decades, if not longer, before it suddenly exploded in advanced democratic regimes. In a previous post, I recounted my daily experience of swimming in a vast ocean of disinformation. Some researchers have argued that colonialism was one of…