Category: Economics

-

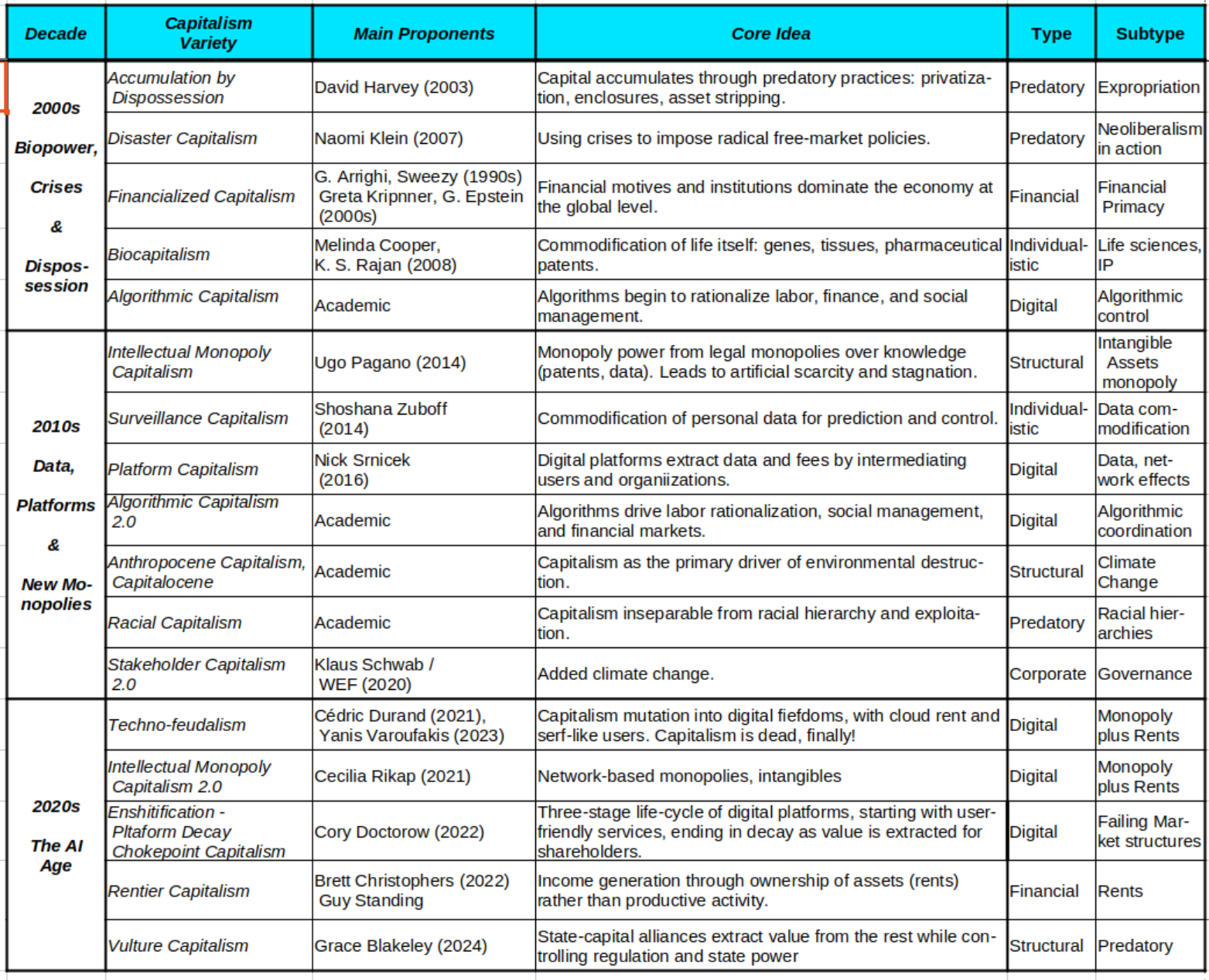

One Capitalism Too Many

For those of us who have been riding the socioeconomic roller coaster of digital technologies for years, the word “revolution” has lost much of its meaning. Since the advent of the PC (personal computer, just in case) in 1981, we have had no choice but to hear it ad nauseam. The PC revolution was then…

-

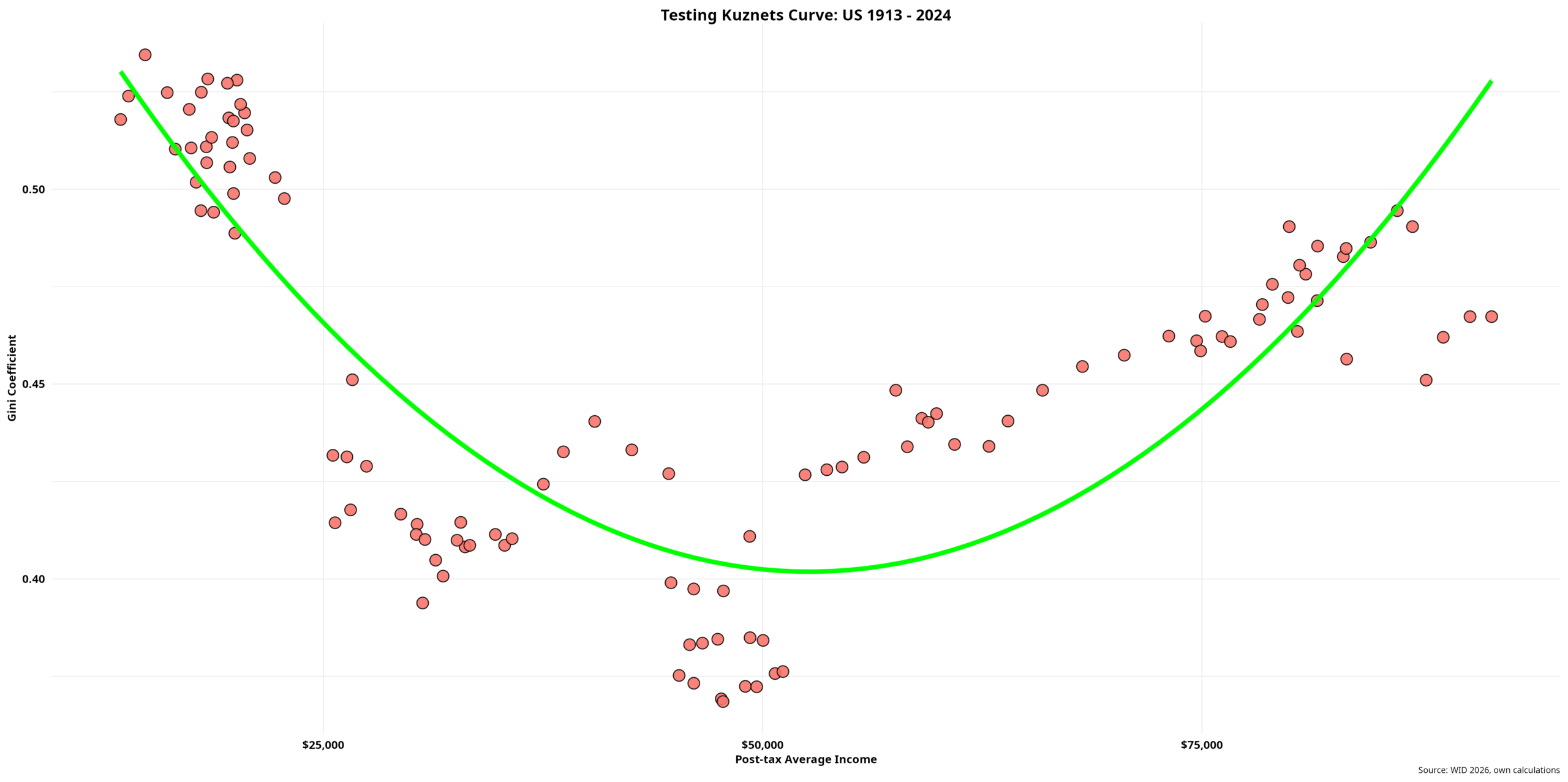

Conspicuous Inequality – IV

Development Economics: Kuznets’ incessant empirical drive prompted him to dive into developing countries, seeking additional data to further test his long-term hypothesis on income inequality. As we saw in the previous post, the scant data available for a few developed countries only showed declining inequality matched by increased economic growth. Evidence regarding trends in inequality…

-

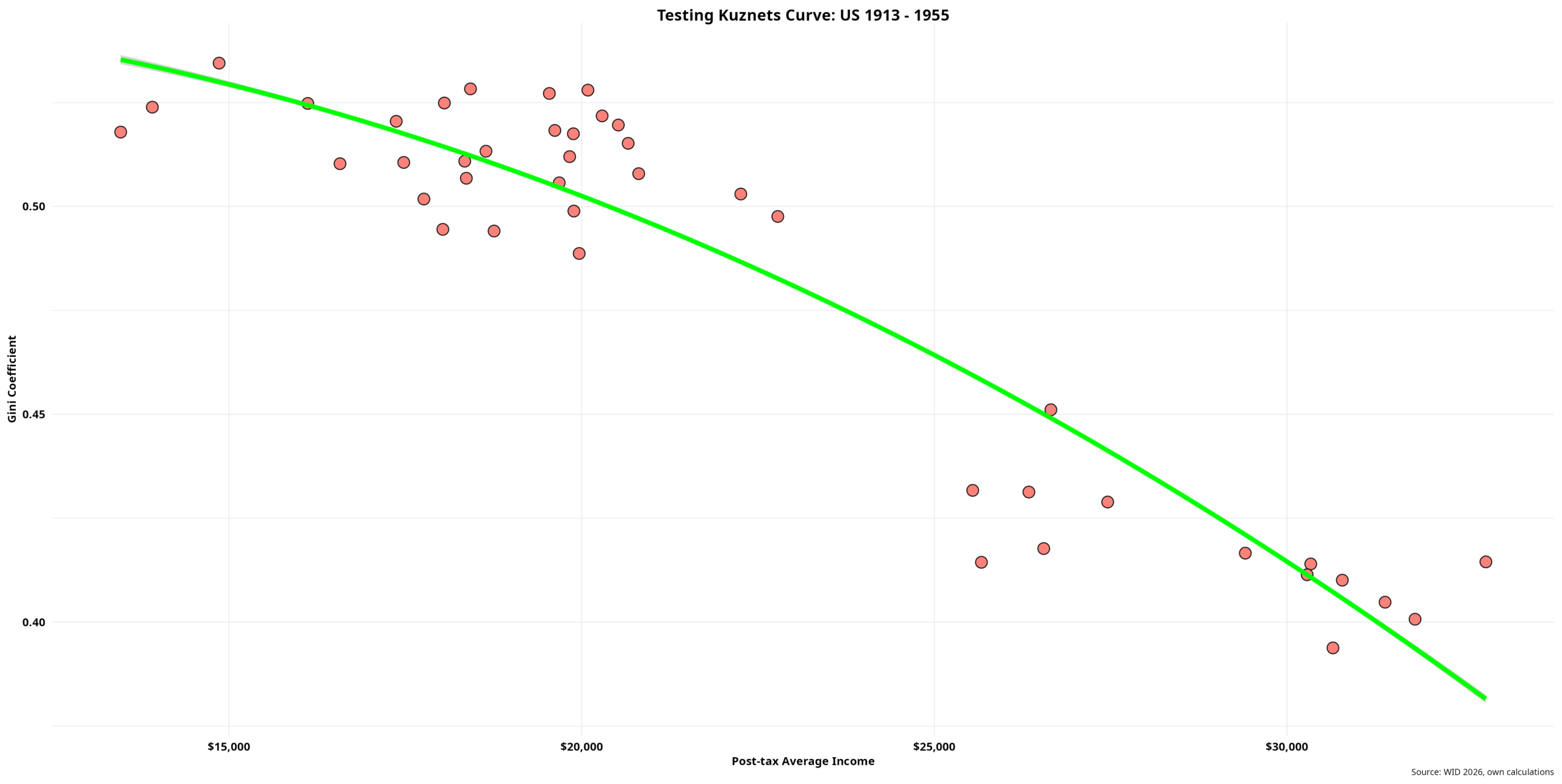

Conspicuous Inequality – III

Back to Kuznets: A cursory overview of Kuznets’ personal and academic background reveals his distinct approach to economics, in contrast with the marginalist school. Having migrated to the U.S. in 1922, he then completed his Ph.D. in economics at Columbia University four years later under the supervision of W. C. Mitchell. Mitchell, one of the…

-

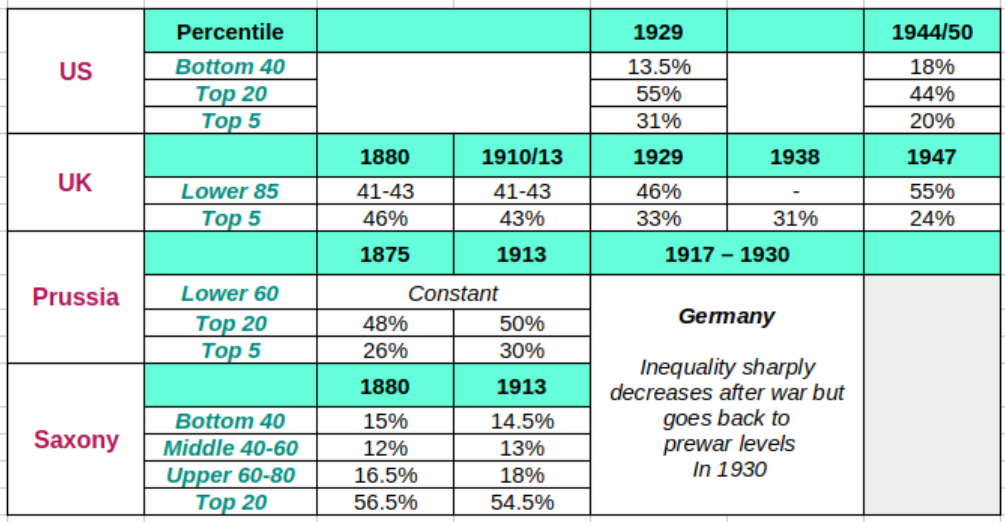

Conspicuous Inequality – II

Brief History of Inequality: Since the earliest days of capitalist development, inequality has captivated policymakers and researchers alike. By the time Kuznets penned his influential article, a lot of water had gone under that bridge. The Physiocrats and, primarily, Classical Political Economy, spearheaded by Adam Smith, deserve particular mention. They placed inequality at the very heart of…

-

Conspicuous Inequality – I

Roaring Crisis: Among the many ripple effects of the 2008/09 Global Financial Crisis, two interrelated outcomes stand out as perhaps the most unexpected. First, the so-called “Marx revival” was made possible by the deep global crisis, which strongly corroborated his argument that capitalism is inherently unstable. Mainstream economists, who adore steady states and equilibria, lack any…

-

Brilliant AI Chatbots

Just over a decade ago, we could not stop talking about surveillance. Indeed, the 2013 Snowden files revealed a series of spook technologies that most people had only seen in largely mediocre Hollywood films, always with a reference to the Stasi or “communism,” of course. So-called “democracies” will never do that: such was the implicit, subliminal…

-

Commoditizing Digital Sovereignty – III

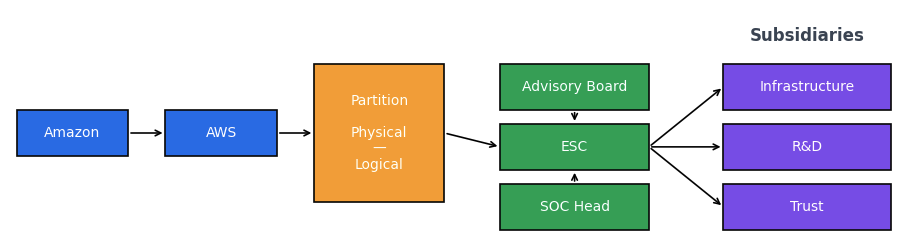

Undoubtedly, ESC is a sophisticated proposition—clearly the result of extensive thought, careful planning, and considerable groundwork, both operational and political. It is also important not to overlook the substantial capital and expertise cloud corporations bring to the table. Let me begin with AWS’s corporate strategy. Creating a new “parent” company with Amazon as its own…

-

Commoditizing Digital Sovereignty – II

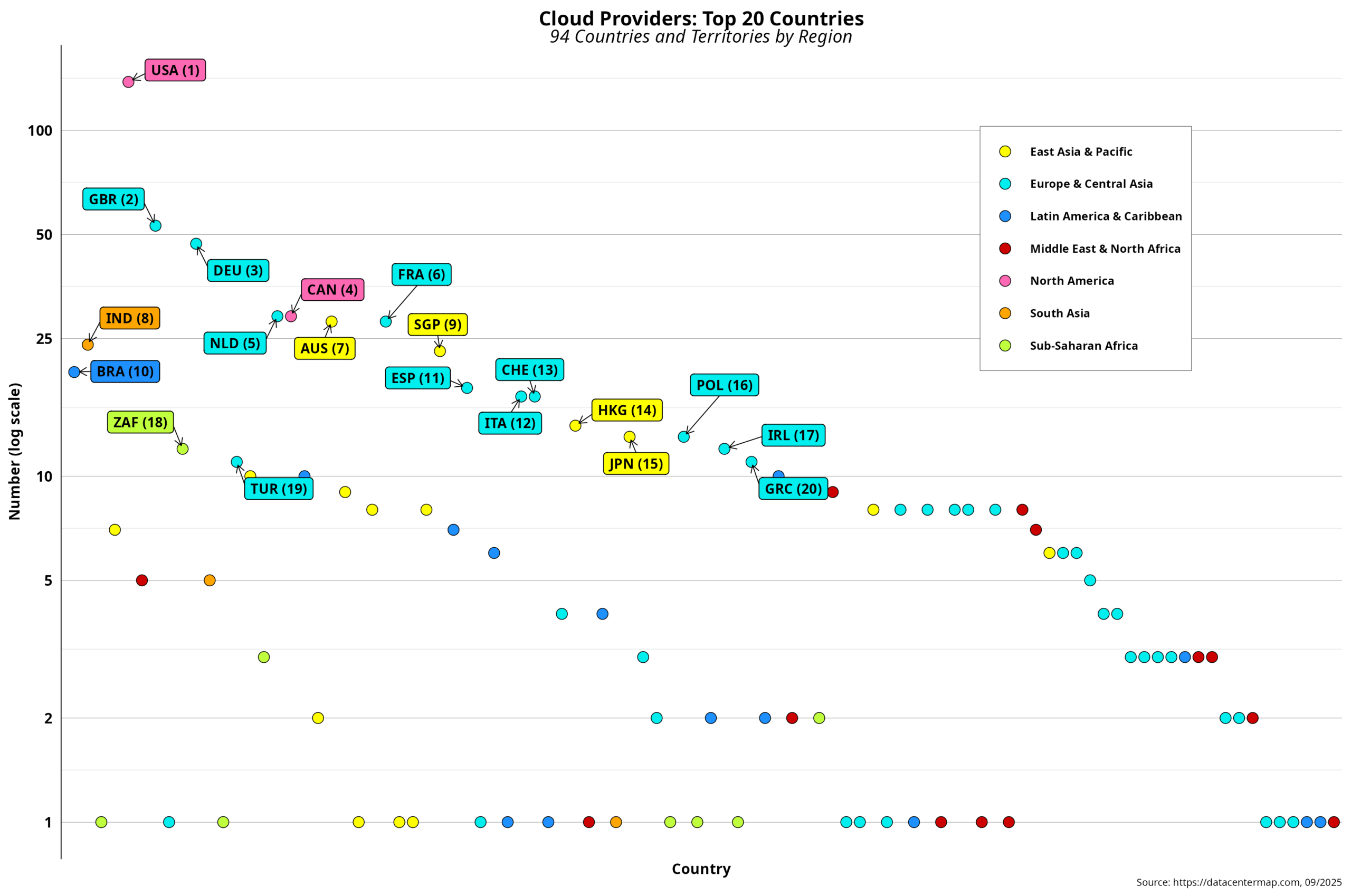

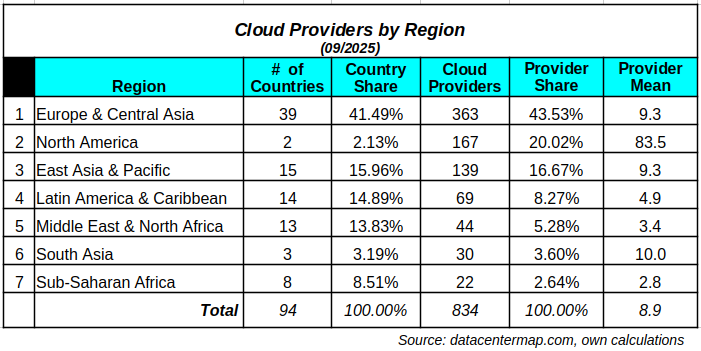

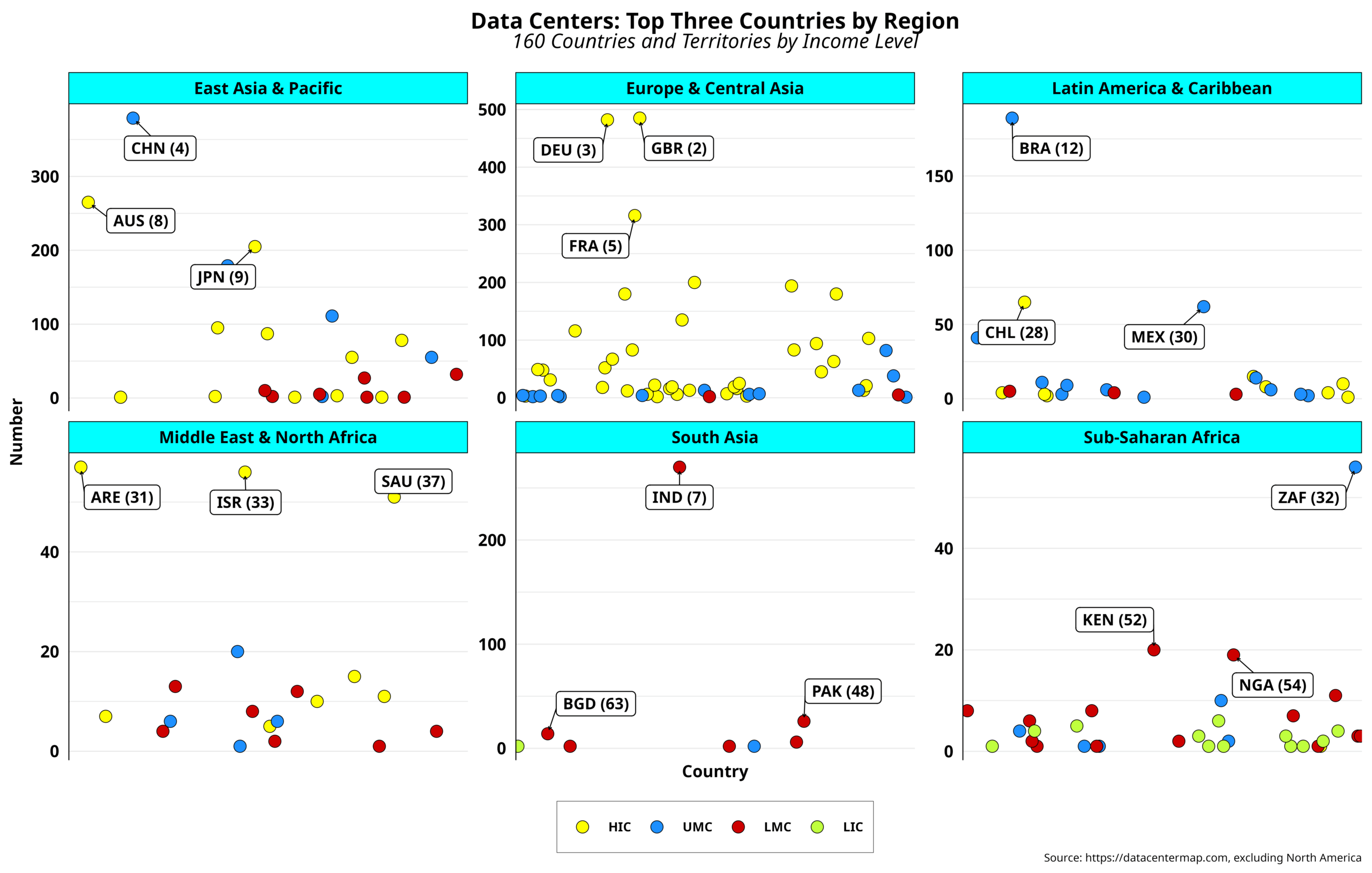

Research I recently undertook showed that the cloud sector is large and unequal. Reliable estimates indicate that a few thousand cloud firms operate globally. At the same time, the top three suppliers (Amazon, Microsoft, and Google) together account for over 60 percent of the market. While the latter figure might be overestimated according to some…

-

Commoditizing Digital Sovereignty – I

Perhaps one of the most poignant features of capitalism is its unparalleled capacity to transform almost anything into a commodity. Indeed, everything is for sale, and a price tag can be attached to anything, tangible or intangible. Simplifying, it can be argued that, historically, this transformation started with tangible goods in agriculture and manufacturing…

-

Clouds in the Cloud – II

Talk about an ongoing AI bubble seems to be getting louder by the minute. As previously mentioned, estimates indicate that by 2030, close to 7 trillion USD will be invested in the sector—assuming the bubble will not burst in the interim. Most of this massive investment is expected to go toward digital infrastructure, led by…

-

Clouds in the Cloud – I

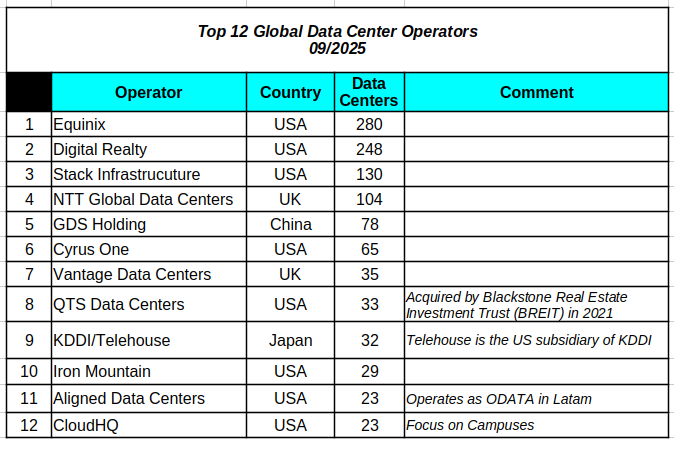

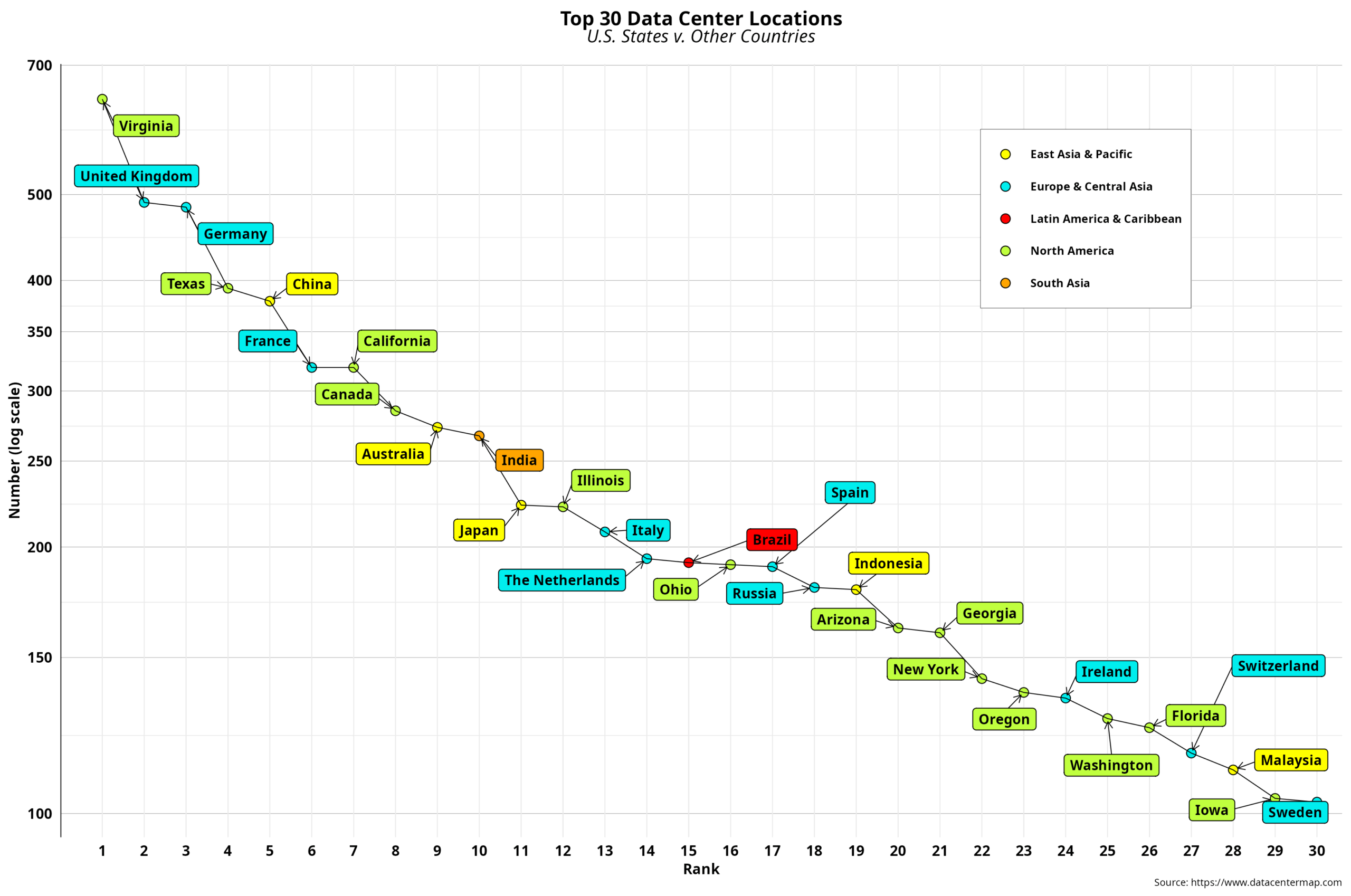

Now that we have a clearer picture of the uneven distribution of data center locations, we can take a closer look at cloud providers. The first step here is to conceptually differentiate between data centers and cloud providers. Recall that the business literature states that cloud providers are one of the five types of data…

-

Data Center Resistance

Two weeks ago, Mexico announced a 4.8 billion USD data center investment sponsored by CloudHQ, one of the top 12 operators globally by number of sites. The target city is Querétaro, the leading Mexican data center location with 19 facilities. Querétaro is also among the top 15 developing-country towns in the data center race. The…

-

Data Center Centralization – IV

The last time I visited Eswatini was in late 2019, three months before the COVID-19 pandemic almost brought our collective imagination to a halt. Digital government support was the excuse for the business trip. I had been there a couple of times before and was thus familiar with the territory. Elections held the previous year…

-

Data Center Centralization – III

Country data typically provides a comprehensive macro overview of the current status of a particular theme, such as data centers in our case. The same goes for CO2 emissions, for example. On the other hand, countries are geographical abstractions that place the topic within well-defined boundaries, where a national state exercises its sovereignty to the…

-

Data Center Centralization – II

By now, we are aware that data center deployments have a clear geographical bias. Indeed, one region is significantly ahead of the rest. Regions composed mostly of industrialized countries follow, albeit at a considerable distance. The rest can hardly breathe. In any case, such patterns could serve as the first clue about which areas are…